The $25K Bloomberg Terminal Couldn't Track Stablecoins. My AI Analyst Does, For Free.

The open-source architecture that aggregates, synthesizes, and escalates stablecoin data without the $25,000/year price tag.

There is no Bloomberg Terminal for stablecoin intelligence. Not one that captures on-chain flows, DeFi yields, macro context, real-time wallet movements, and regulatory shifts, then synthesizes it all into a coherent daily brief. Bloomberg costs $25,000/year and still misses 80% of the signals that matter in a market moving at warp speed.

The stablecoin market has quietly scaled into a $300B+ asset class clearing over $30T in annual transactions. Banks are now green-lit under the U.S. GENIUS Act to hold regulated stablecoins on their balance sheets, and RWAs are forecast to exceed $3T by 2030. The pipes are here, but nobody is aggregating the signal layer yet.

The data exists. It’s just fragmented across DeFiLlama, Dune, FRED, SEC EDGAR, dozens of news feeds, and countless protocol APIs. The problem isn’t data scarcity; it’s synthesis and actionable interpretation.

I needed an edge. So, I built it.

This isn’t a tutorial on “using AI for research.” This is the actual system I built and run daily. It aggregates every critical stablecoin data point (on-chain, TradFi, macro, regulatory, news) and synthesizes it into a coherent, sell-side-quality brief. It costs me ~$0-$5/day, depending on how often I use the AI synthesis.

Here’s everything.

What This System Actually Produces

My goal was simple: a daily, comprehensive intelligence brief that covers the entire stablecoin ecosystem, plus a weekly Goldman-style research report.

Output 1: The Daily Intelligence Brief

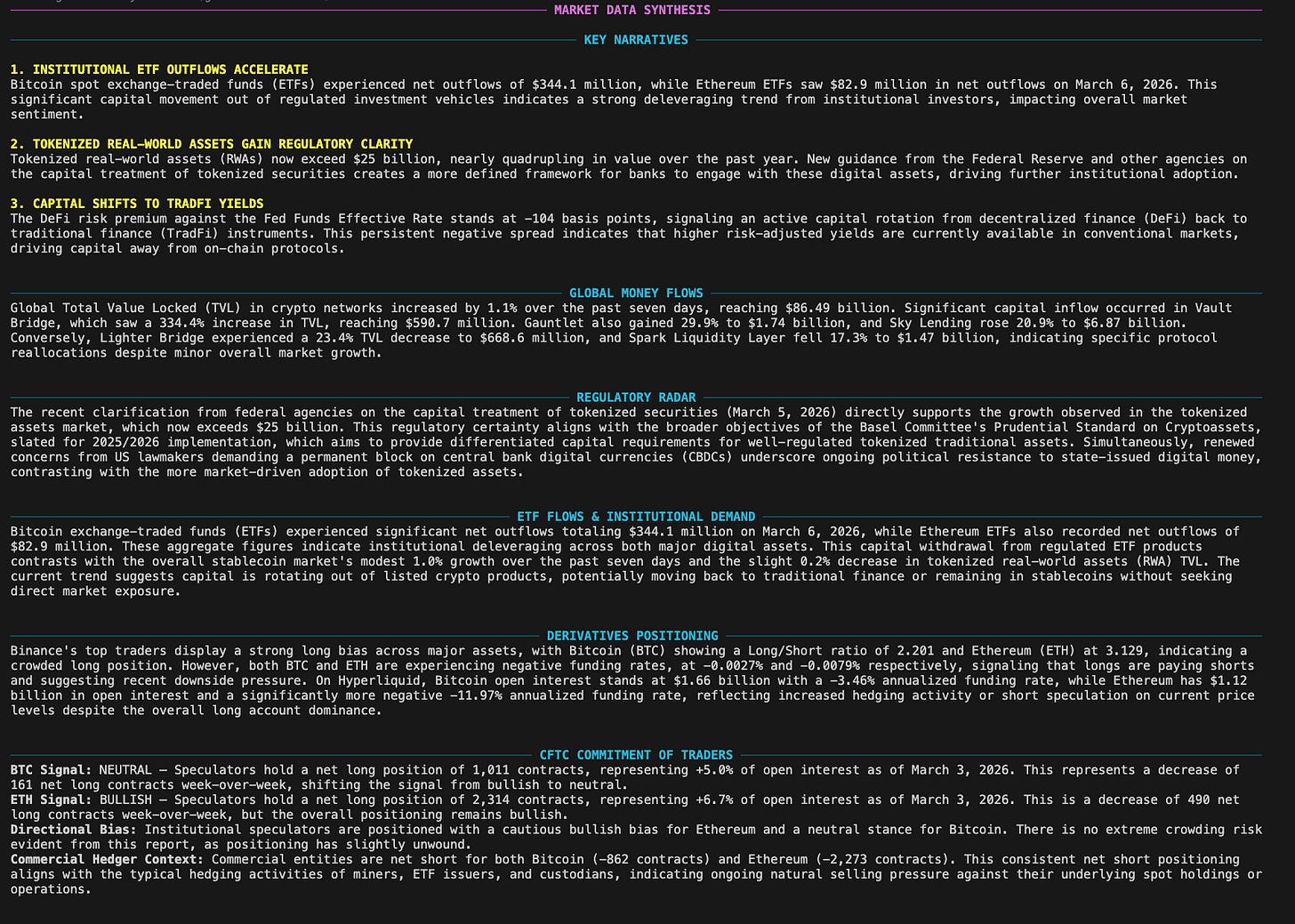

Every morning, I run python run_briefing.py. This fires off a cascade of data fetches, including 20 RSS feeds, then passes the raw data to Google Gemini 2.5 Flash for a multi-section institutional analysis. The output is rendered as a terminal dashboard, emailed to my inbox, and even converted into a podcast.

It covers 25 distinct intelligence categories:

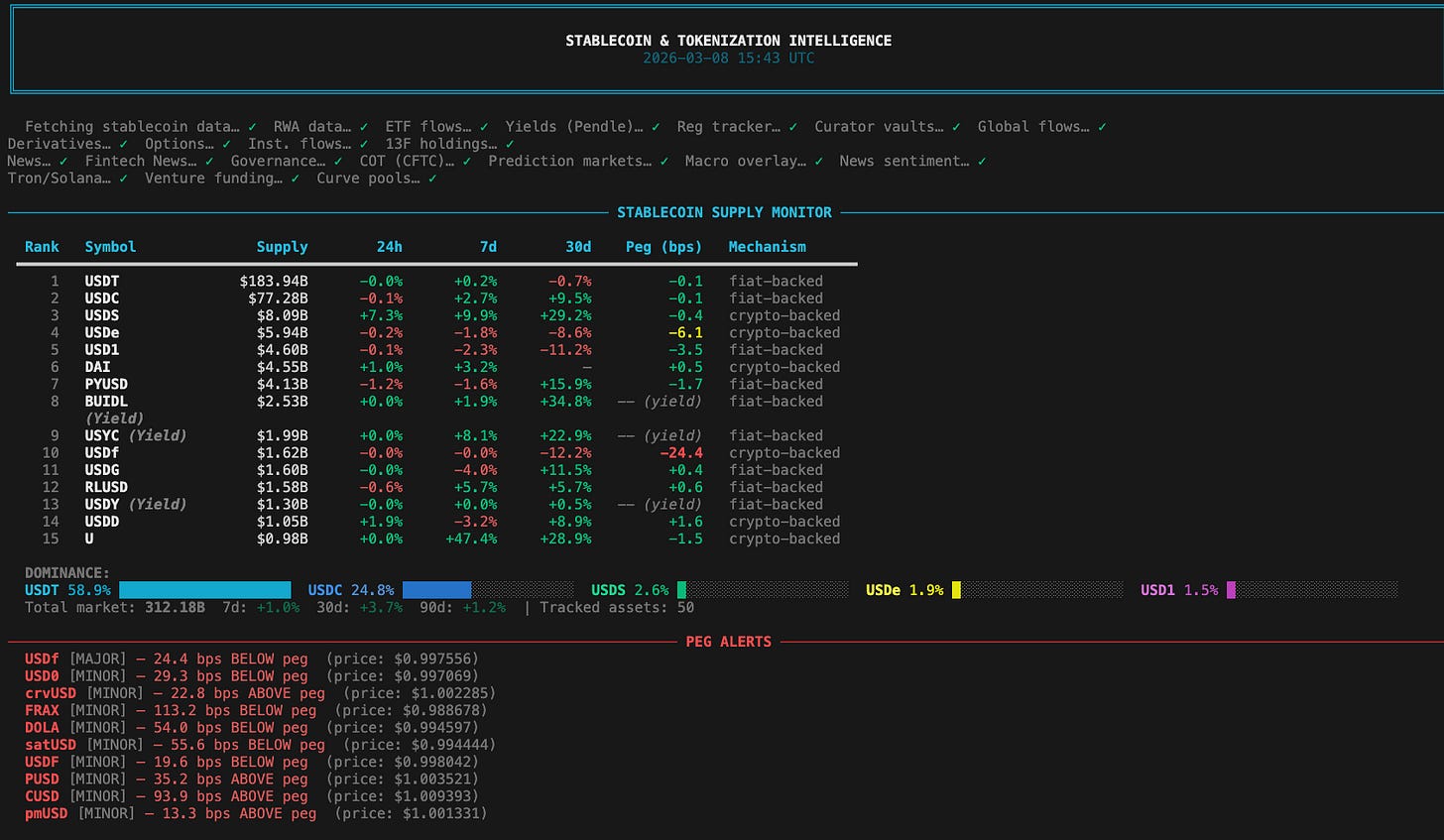

Stablecoin Supply Monitor: Top 15 stablecoins by supply, % changes, peg deviation, mechanism type.

Peg Alerts: Any stablecoin >10bps off peg, with severity and risk explanation.

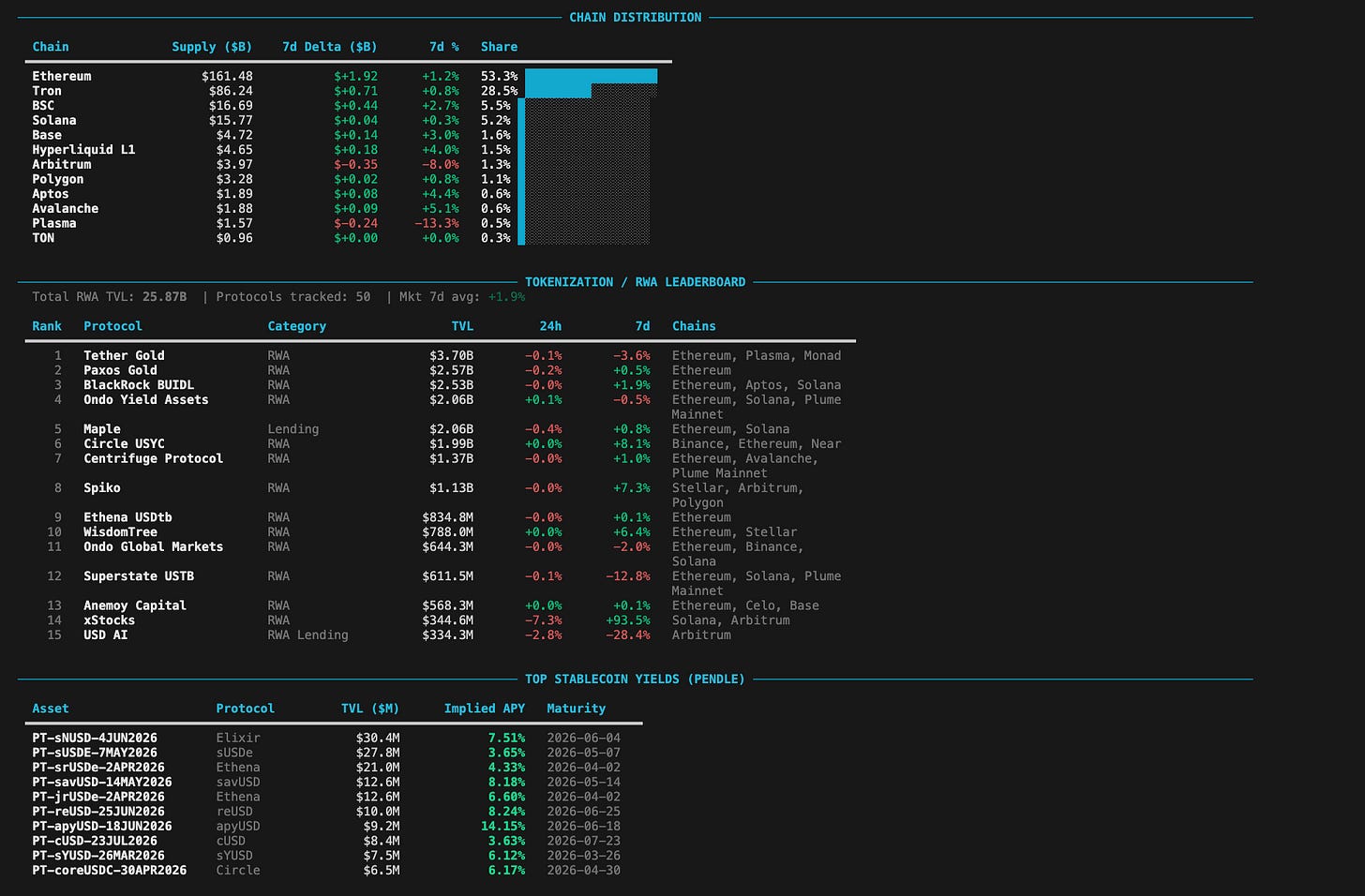

Chain Distribution: Where stablecoin supply lives, with 7d growth rates (Base/Solana = retail/DeFi; Tron = EM/OTC).

RWA/Tokenization Leaderboard: Top 15 protocols by TVL with 7d momentum.

Pendle Yield Markets: Stablecoin derivative yield curves across DeFi.

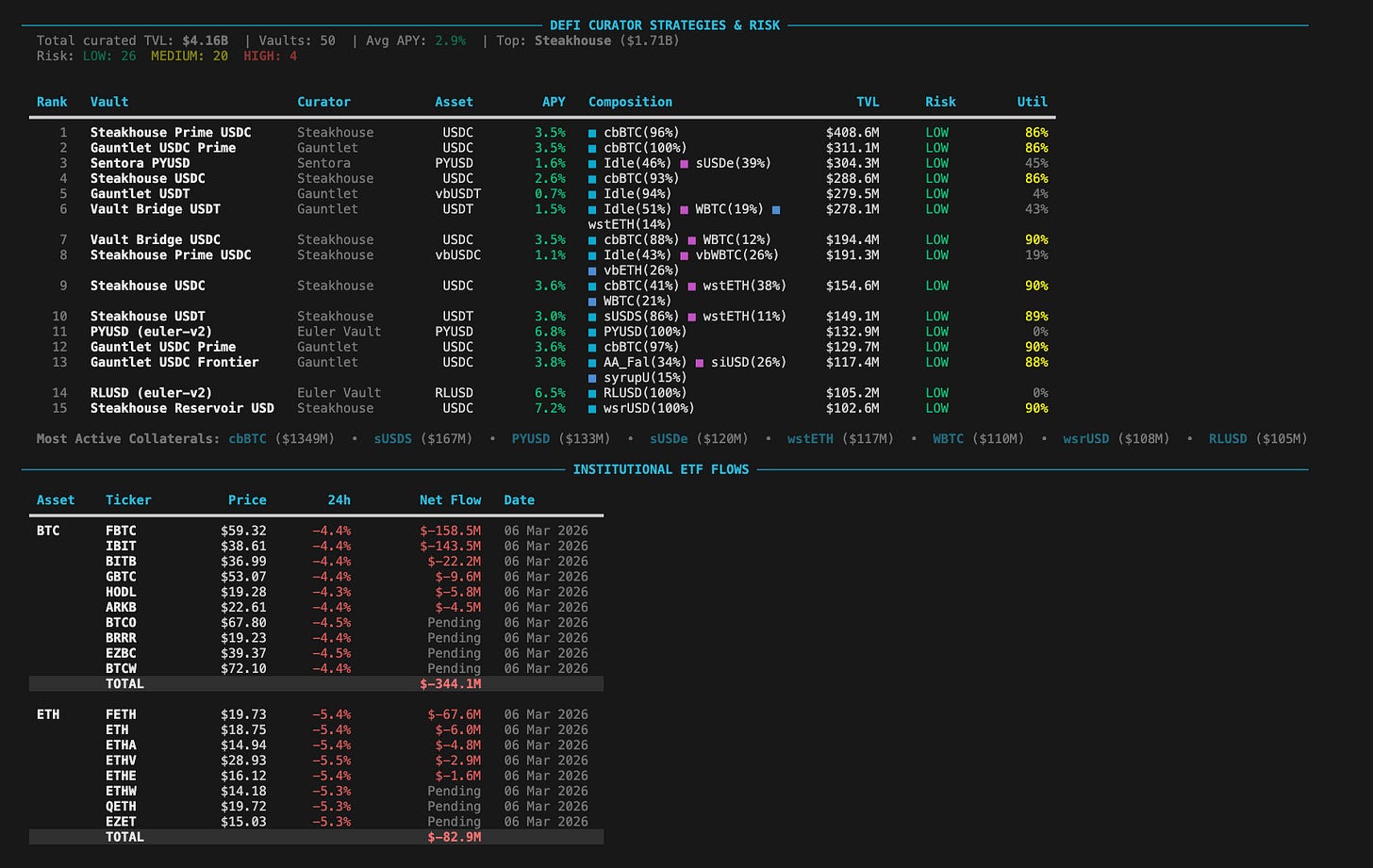

DeFi Curator Risk Dashboard: Morpho Blue vault risk scores (APY, TVL depth, curator reputation, utilization, chain maturity).

ETF Flows: Daily BTC and ETH ETF net flows with aggregate totals.

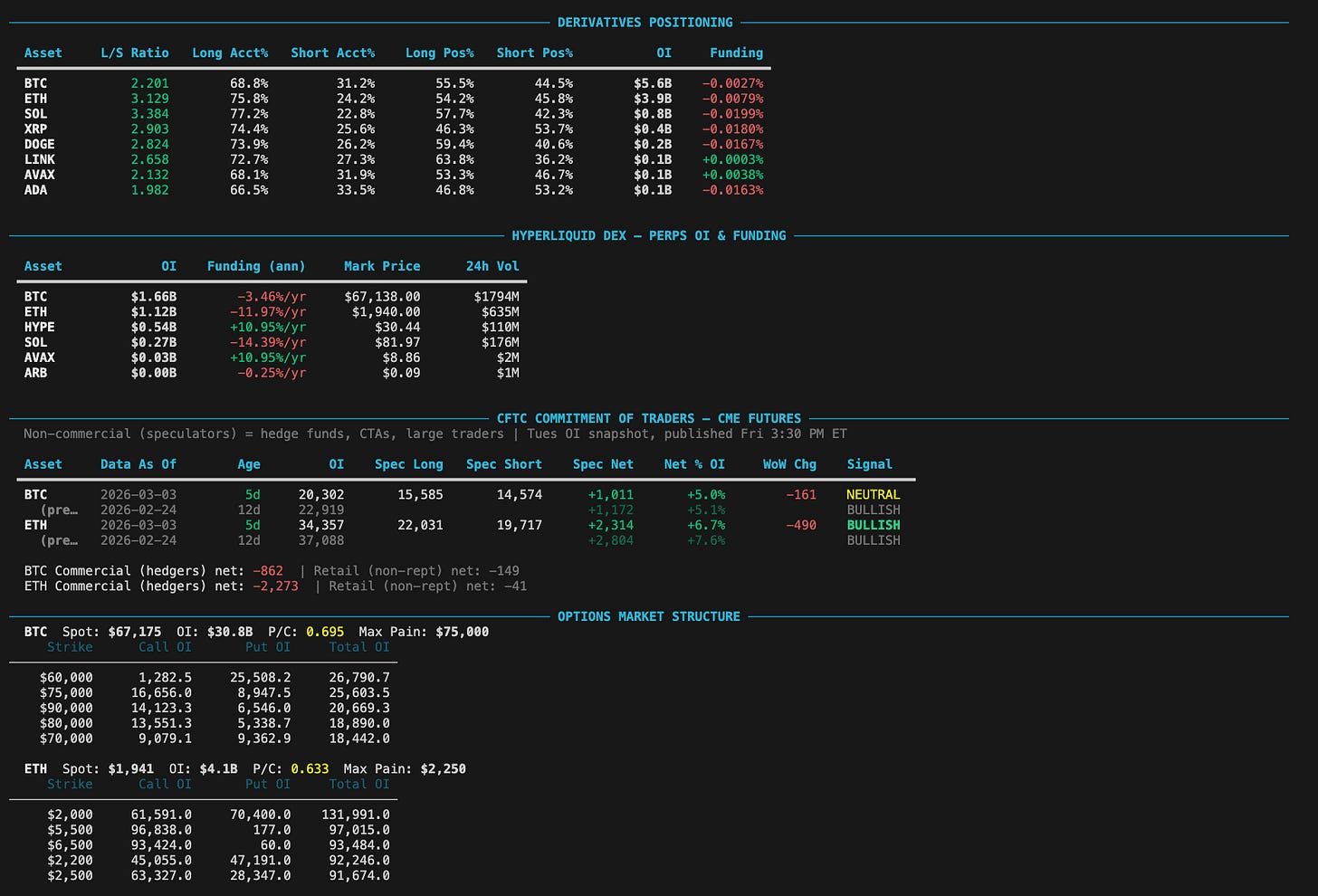

Derivatives Positioning: Binance top-trader long/short ratios for major assets.

Hyperliquid Perpetuals: OI + funding rates for BTC/ETH/SOL/HYPE/ARB/AVAX.

CFTC Commitment of Traders: CME managed money and commercial hedger net positions for BTC/ETH futures.

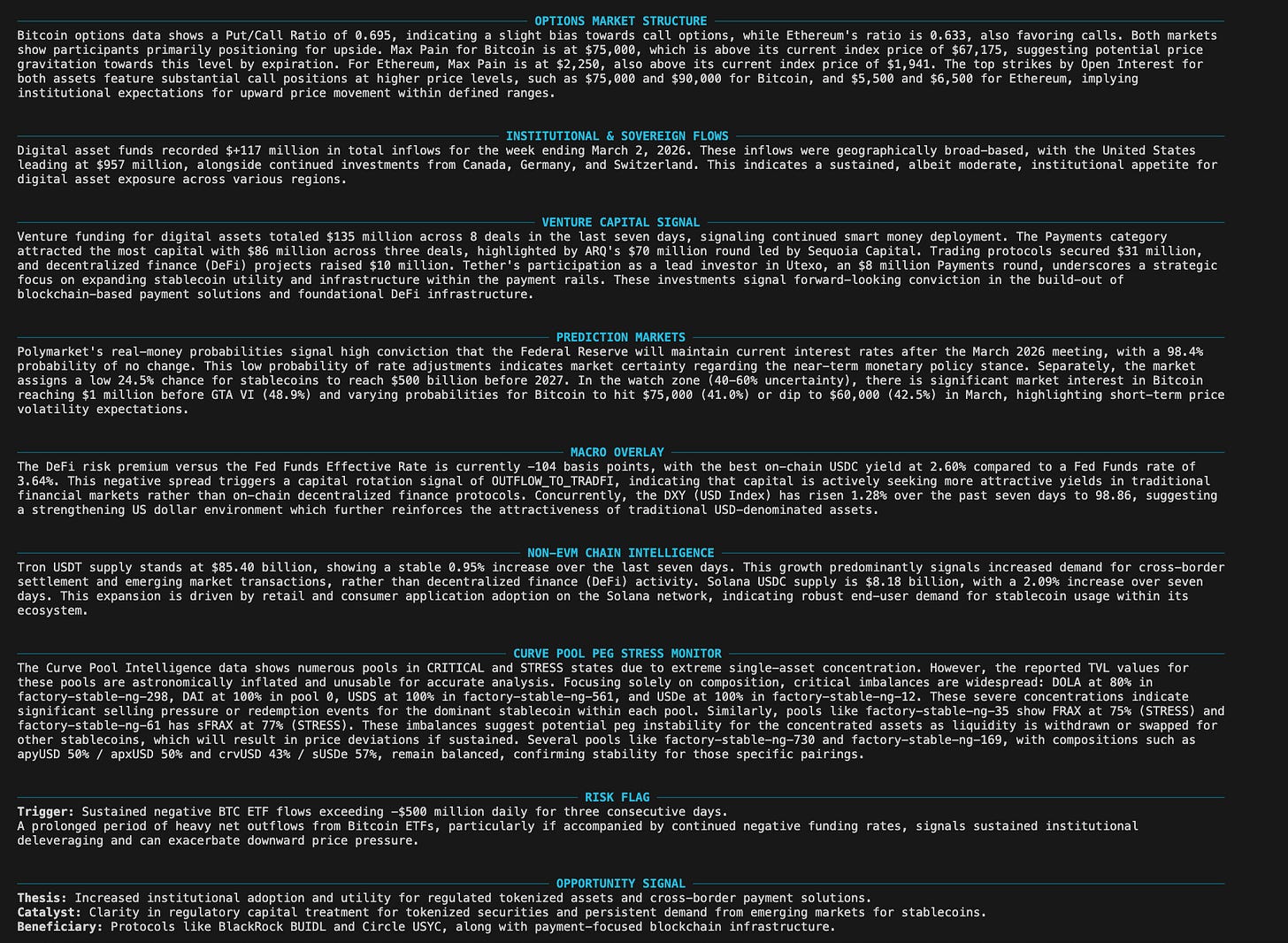

Options Market Structure: Deribit BTC/ETH put/call ratios, max pain, top strikes by OI.

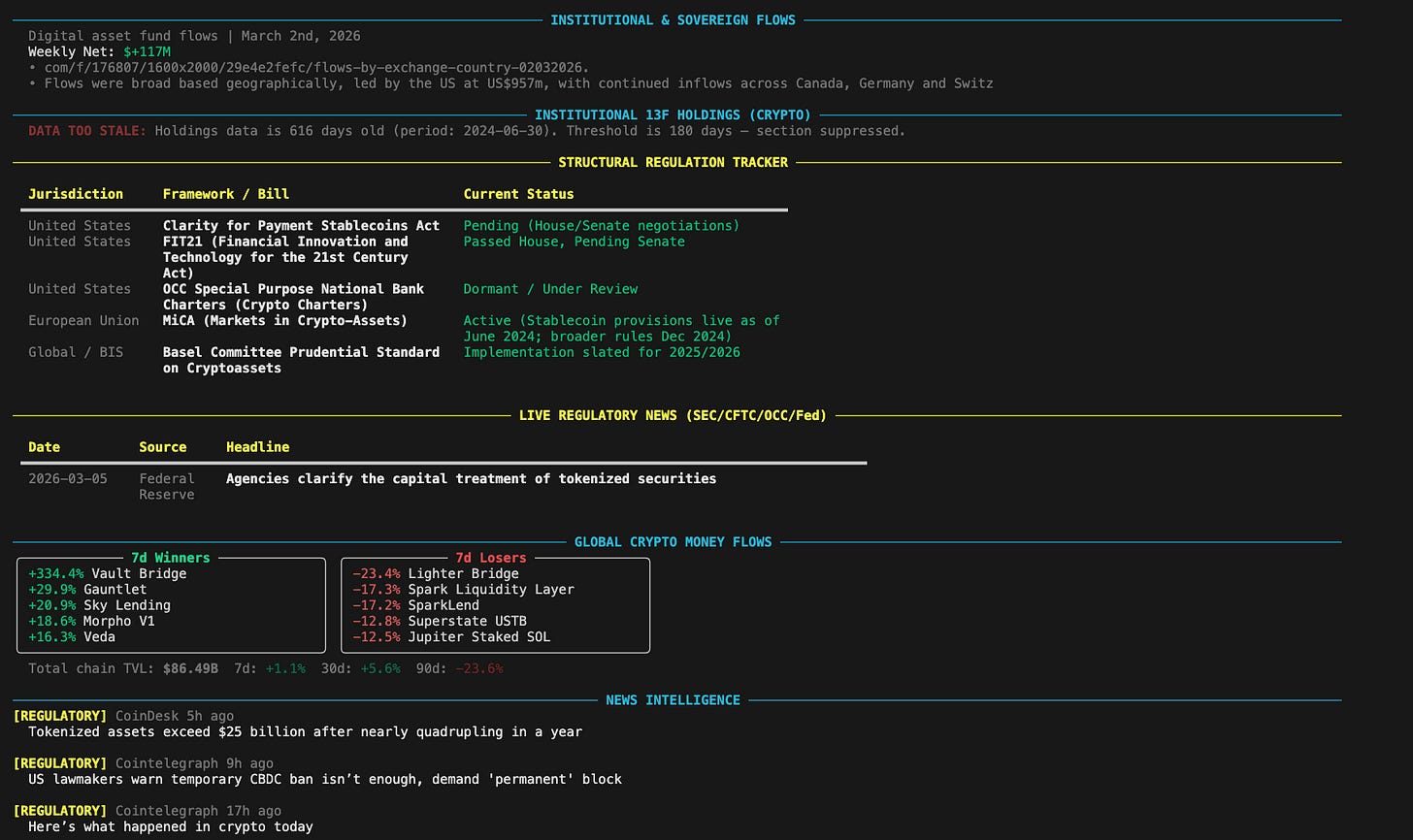

Institutional Fund Flows: CoinShares weekly digital asset fund flow data.

13F Holdings Tracker: SEC EDGAR institutional crypto holdings (with explicit 45-135 day data-lag warnings).

Legislation Tracker: Static bill status for GENIUS Act, STABLE Act, FIT21, MiCA.

Live Regulatory News: SEC/CFTC/OCC/Fed/FinCEN RSS feeds, filtered.

Global Money Flows: DeFiLlama TVL flows showing capital rotation.

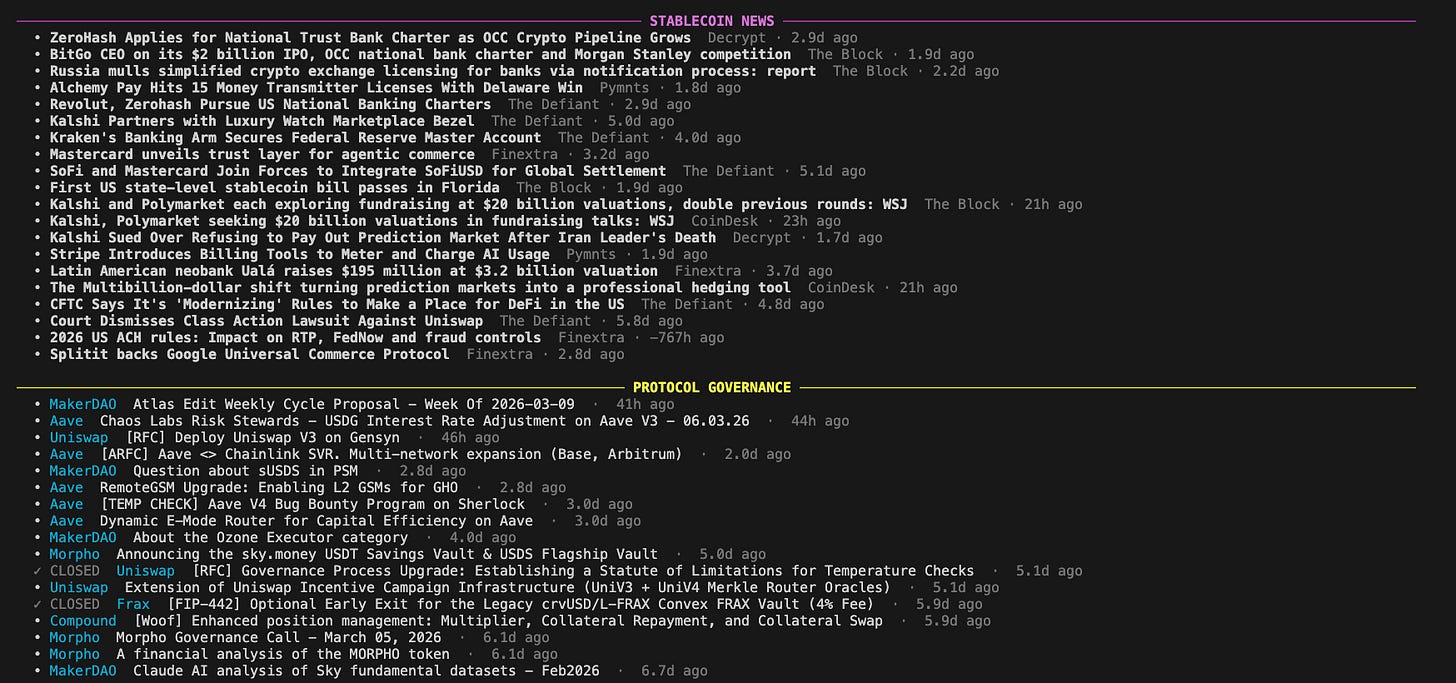

Crypto/Fintech News: Priority-sorted headlines from 20 RSS subscriptions across 8 stablecoin-focused and 12 fintech/banking sources.

Governance Signals: Active Snapshot proposals across major DeFi protocols.

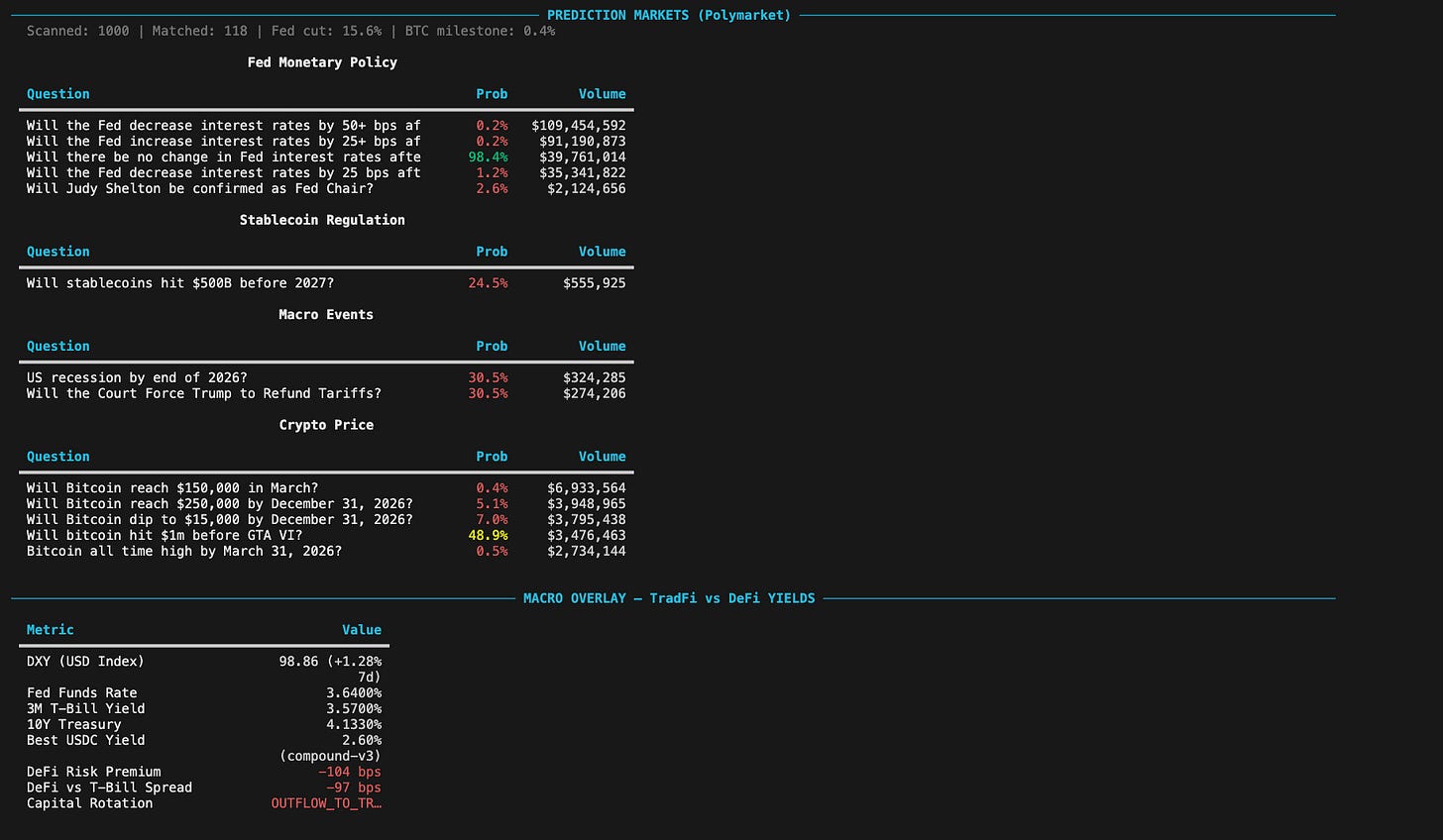

Prediction Markets: Polymarket real-money probabilities on key crypto/macro events.

Macro Overlay: DXY, Fed funds, T-Bill/Treasury yields vs on-chain USDC lending rates, with DeFi risk premium.

News Volume Signal: RSS keyword frequency analysis (now specifically counting headlines to gauge concentration).

Non-EVM Chain Intelligence: Tron USDT (EM settlement) and Solana USDC (retail demand) supply and velocity.

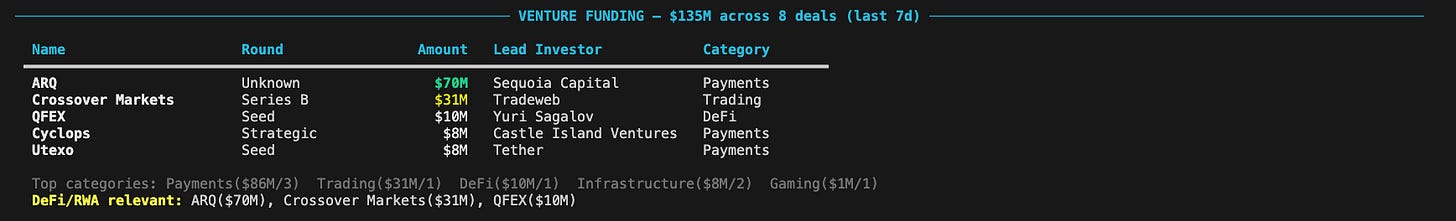

Venture Funding Signal: Daily tracking of deals, top categories, and top investors from DeFiLlama raises.

Curve Pool Peg Stress Monitor: Early-warning detection of capital flight across Curve stablecoin liquidity pools (30-60 minutes ahead of oracles).

Gemini AI Synthesis: A multi-section institutional analysis, like a sell-side morning note, covering key narratives, global money flows, regulatory radar, and more.

(Note: Reserve Attestation monitoring exists in the codebase as a standalone tool, but is kept out of the automated daily brief to maintain brevity.)
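The Options Market Structure category reports put/call ratios and max pain for Deribit BTC/ETH options. Max pain is conventionally the expiry strike at which the total intrinsic value paid out to option holders is smallest. Here is a minimal sketch of both calculations, assuming the options chain has already been fetched and reduced to hypothetical (strike, call OI, put OI) rows for a single expiry; the author’s actual Deribit parsing isn’t shown, and the function names are illustrative.

```python
from typing import List, Tuple

# Hypothetical input: one (strike, call_open_interest, put_open_interest) row per strike
# for a SINGLE expiry, already parsed from the options chain.
Row = Tuple[float, float, float]


def holder_payout(settle: float, chain: List[Row]) -> float:
    """Total intrinsic value owed to option holders if the expiry settles at `settle`."""
    total = 0.0
    for strike, call_oi, put_oi in chain:
        total += call_oi * max(settle - strike, 0.0)  # calls are in the money above the strike
        total += put_oi * max(strike - settle, 0.0)   # puts are in the money below the strike
    return total


def max_pain(chain: List[Row]) -> float:
    """Listed strike that minimises the total payout to option holders."""
    return min((strike for strike, _, _ in chain), key=lambda s: holder_payout(s, chain))


def put_call_ratio(chain: List[Row]) -> float:
    """Put/call ratio measured on open interest."""
    calls = sum(call_oi for _, call_oi, _ in chain)
    puts = sum(put_oi for _, _, put_oi in chain)
    return puts / calls if calls else float("inf")
```

Evaluating candidate settlement prices only at listed strikes, rather than on a continuous price grid, follows the usual max-pain convention and keeps the search trivially cheap.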

Additional features:

Historical comparison: Each run is snapshotted and compared against the prior run for day-over-day changes.

Data feed health dashboard: Every data source is tracked. Failures are explicitly flagged in the Gemini prompt so the AI never hallucinates over missing data.

Hallucination guard: Cross-references Gemini output numbers against source data.

Eval scoring: Automated quality scoring of synthesis output.

Email delivery: Sends the synthesis via Resend.

Podcast generation: Converts the briefing into an audio podcast via Google NotebookLM.
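The hallucination guard’s job is easy to state: every figure Gemini writes should trace back to a number in the source data. A minimal sketch of that idea, assuming the source data has been flattened into a list of floats and that claims appear as “$2.3B”-style dollar figures or percentages. The regexes, the 1% tolerance and the function names are assumptions, not the contents of hallucination_guard.py.

```python
import math
import re

_SCALE = {"": 1.0, "K": 1e3, "M": 1e6, "B": 1e9, "T": 1e12}
# Assumed claim formats: dollar figures with an optional K/M/B/T suffix ("$2.3B", "$450M")
# and percentages ("+5.4%"). Spelled-out units ("2.3 billion") are not handled.
_DOLLAR = re.compile(r"\$(\d[\d,]*(?:\.\d+)?)(?:\s*([KMBT])\b)?", re.IGNORECASE)
_PERCENT = re.compile(r"([+-]?\d+(?:\.\d+)?)%")


def extract_figures(text: str) -> list[tuple[str, float]]:
    """Pull (raw_text, numeric_value) pairs for every dollar and percent figure."""
    figures = []
    for m in _DOLLAR.finditer(text):
        scale = _SCALE[(m.group(2) or "").upper()]
        figures.append((m.group(0), float(m.group(1).replace(",", "")) * scale))
    for m in _PERCENT.finditer(text):
        figures.append((m.group(0), float(m.group(1))))
    return figures


def unverified_figures(text: str, source_values: list[float], rel_tol: float = 0.01) -> list[str]:
    """Figures in the AI text that match no source value within a relative tolerance."""
    return [
        raw
        for raw, value in extract_figures(text)
        if not any(math.isclose(value, src, rel_tol=rel_tol) for src in source_values)
    ]
```

Matching against a flat list is deliberately crude: it confirms that a number exists somewhere in the inputs, not that the model attached it to the right metric.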

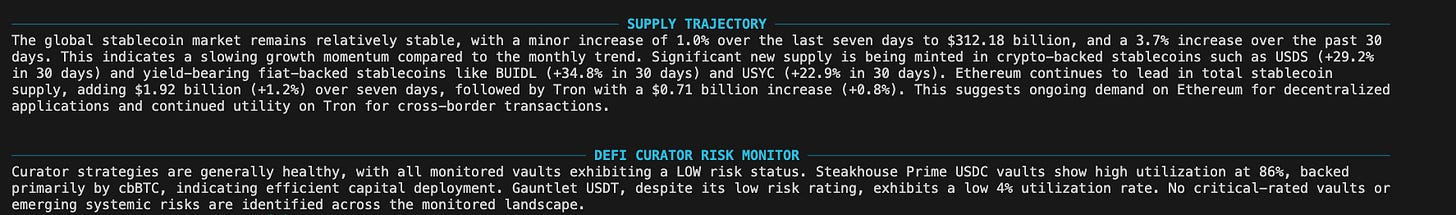

Output 2: The Weekly Sell-Side Research Report

For deeper dives, I run python run_research_report.py. This generates a 3,000-5,000 word Goldman-style weekly research note. I use it to understand the current market more deeply, and it could also serve as the basis for LP letters or investment committee memos.

It covers:

Executive Summary

Investment Themes 1-3 (thematic theses with evidence and catalysts)

Market Structure Analysis (stablecoin flows, CEX signals, on-chain velocity)

Capital Formation Tracker (RWA, ETFs, institutional inflows, DeFi yields)

Regulatory Landscape (live legislation, enforcement trajectory)

Derivatives Positioning (long/short ratios, funding rates, options skew)

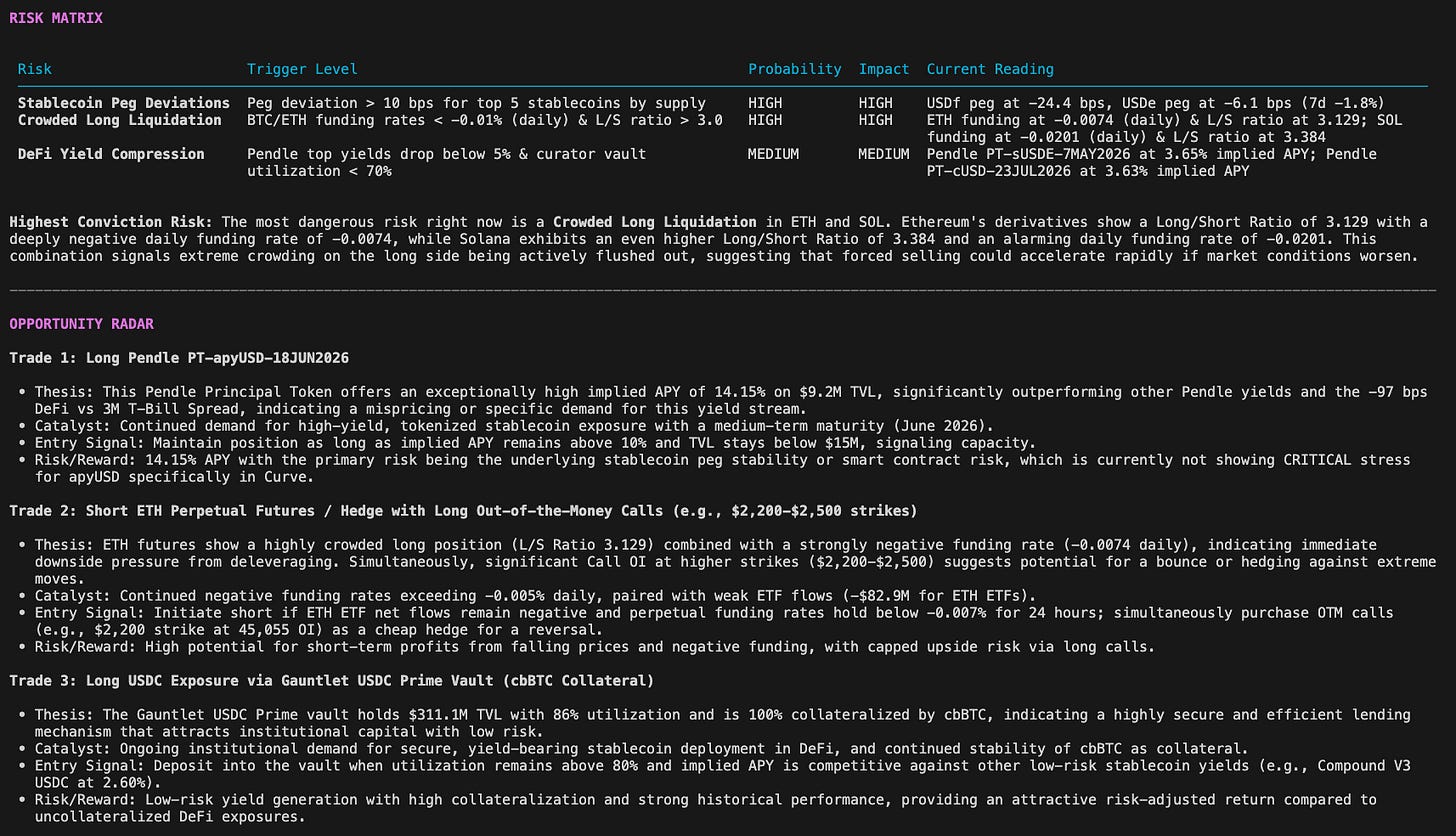

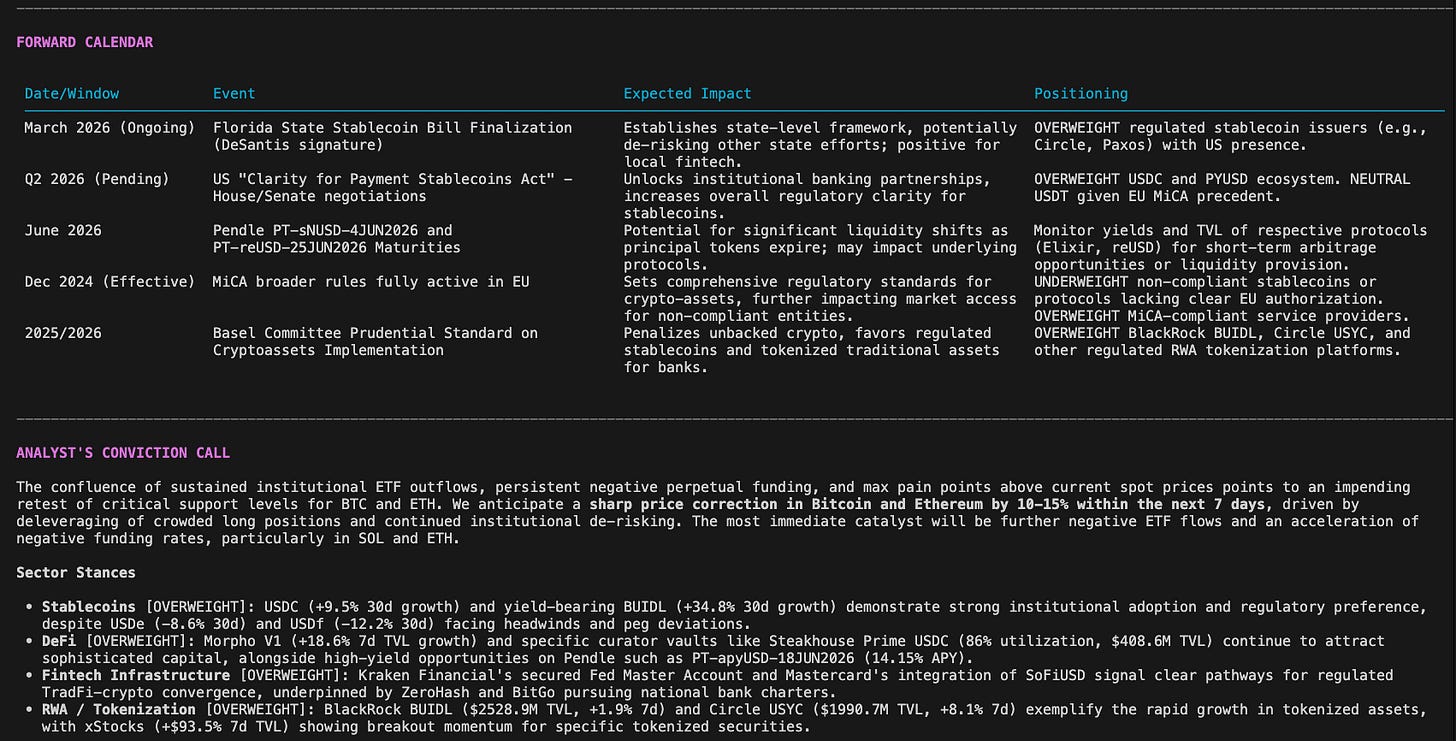

Risk Matrix (top 3 risks with measurable trigger levels)

Opportunity Radar (top 3 trade ideas with specific entry signals)

Forward Calendar (binary events and positioning)

Conviction Call (one directional view)

It’s also flexible, with focus modes for specific deep-dives:

```bash
python run_research_report.py --focus stablecoins
python run_research_report.py --focus defi
python run_research_report.py --focus fintech
python run_research_report.py --focus capital_formation
```

Full report accessible below.
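The focus modes map naturally onto a single command-line flag. A sketch using Python’s standard argparse, where the per-focus prompt addenda are hypothetical placeholders loosely based on the report sections listed above (the real templates in research_report_engine.py aren’t published here):

```python
import argparse
from typing import List, Optional

# Hypothetical per-focus addenda; the real research_report_engine.py templates
# are not published in this article.
FOCUS_INSTRUCTIONS = {
    "stablecoins": "Go deep on stablecoin flows, supply, peg stability and on-chain velocity.",
    "defi": "Go deep on DeFi yields, curator vault risk and governance signals.",
    "fintech": "Go deep on fintech and banking news flow and bank-DeFi convergence.",
    "capital_formation": "Go deep on RWA, ETF and institutional inflows.",
}


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    parser = argparse.ArgumentParser(description="Weekly sell-side research report")
    parser.add_argument(
        "--focus",
        choices=sorted(FOCUS_INSTRUCTIONS),
        default=None,
        help="optional deep-dive mode; omit for the full report",
    )
    return parser.parse_args(argv)


def focus_addendum(focus: Optional[str]) -> str:
    """Extra text appended to the base research prompt (empty when no focus is set)."""
    if focus is None:
        return ""
    return f"FOCUS MODE ({focus}): {FOCUS_INSTRUCTIONS[focus]}"
```

At startup, focus_addendum(parse_args().focus) would be appended to the base research prompt. Because argparse enforces choices, an unknown focus name exits with an error before any API call is made.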

The Problem This Solves, And Why I Built It

As I mentioned, the existing options fall short:

Bloomberg Terminal: $25,000/year. Doesn’t cover DeFi natively. It’s a TradFi tool, not a crypto-native one.

The Block: $599/year. Good data, but zero on-chain wallet intelligence. It’s a news aggregator with some analytics, not a synthesis engine.

Nansen: $1,000+/year for whale wallet feeds. Valuable, but no macro context, no regulatory overlay, and no automated synthesis.

Manual Excel + DeFiLlama: This used to be my life. About 3 hours a day, just pulling data. Zero AI synthesis, zero real-time alerts. That’s 15 hours a week, nearly two full working days, spent on data collection rather than analysis.

I built this system because I was tired of paying exorbitant fees for incomplete data, or spending half my day as a data entry clerk. This system costs between $0 and $5 per day, and it runs itself.

The Architecture: Workflows, Agents, Tools (WAT)

This system is built on the WAT framework: Workflows (markdown SOPs) → Agents (AI that reads workflows and coordinates) → Tools (deterministic Python scripts). Probabilistic AI handles reasoning; deterministic code handles execution. Simple, robust, and scalable.

```
├── workflows/
│   ├── daily_stablecoin_briefing.md    # Step-by-step SOP for daily briefing
│   ├── sell_side_research_report.md    # Research report SOP
│   └── daily_retrospective.md          # Post-run quality review SOP
├── tools/                              # 41 Python scripts, one concern per script
│   ├── briefing_engine.py              # Daily briefing orchestrator (2,700 lines)
│   ├── research_report_engine.py       # Research report orchestrator
│   ├── stablecoin_data.py              # DeFiLlama: supply, peg prices, chain distribution
│   ├── etf_data.py                     # Finnhub + Yahoo Finance: BTC/ETH ETF flows
│   ├── news_fetcher.py                 # 8 crypto RSS feeds with keyword relevance scoring
│   ├── hallucination_guard.py          # Cross-references AI output numbers
│   └── ... (35 more data fetchers, utils)
└── shared-context/
    ├── THESIS.md                       # Core investment thesis (AI worldview)
    ├── SIGNALS.md                      # Weekly metrics tracker
    └── FEEDBACK-LOG.md                 # Standing corrections for Gemini synthesis
```

briefing_engine.py is the nerve center for the daily brief. It orchestrates everything: it fetches data from 25+ sources, renders Rich terminal tables, builds text blocks, assembles a ~4,000-word structured prompt, calls Gemini 2.5 Flash for synthesis, validates the output with a hallucination guard, runs an eval scorer, and delivers via email.
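The eval scorer isn’t described in detail, so here is a toy version under stated assumptions: it deducts points for the hedging phrases the author’s Analyst Quality Standards ban (listed later in this article) and for synthesis that contains too few concrete figures. The weights, the 0.5 figures-per-sentence floor and the function name are hypothetical.

```python
import re

# Banned hedges are quoted from the article's Analyst Quality Standards;
# the weights and the density floor are hypothetical.
BANNED_PHRASES = ("could potentially impact", "remains to be seen", "the market may react")
_FIGURE = re.compile(r"\$?\d+(?:\.\d+)?\s*(?:%|bps\b|[KMBT]\b)", re.IGNORECASE)
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def score_synthesis(text: str) -> dict:
    """Toy eval: start at 100, deduct per banned hedge and for too few concrete figures."""
    lowered = text.lower()
    hedges = [phrase for phrase in BANNED_PHRASES if phrase in lowered]
    sentences = [s for s in _SENTENCE_END.split(text.strip()) if s]
    density = len(_FIGURE.findall(text)) / max(len(sentences), 1)  # figures per sentence
    score = 100 - 15 * len(hedges)
    if density < 0.5:  # assumed floor: at least one hard number every two sentences
        score -= 20
    return {"score": max(score, 0), "hedges": hedges, "figures_per_sentence": round(density, 2)}
```

A score like this is most useful as a trend across runs rather than as an absolute grade.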

research_report_engine.py imports all the block builders from briefing_engine, so nothing is duplicated. It reuses the data-fetching logic but applies a different Gemini prompt template optimized for long-form, thematic research. This “import and augment” pattern is crucial for keeping the codebase lean.
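The “import and augment” pattern can be sketched in a few lines. Both modules are collapsed into one snippet here, and every name (the builders, the templates, assemble_prompt) is hypothetical; the point is that the deterministic block builders are shared and only the prompt template differs between the daily brief and the weekly report.

```python
# Both "modules" are collapsed into one snippet; every name here is hypothetical.

# --- briefing_engine.py: shared, deterministic block builders ---
def build_supply_block(data: dict) -> str:
    return f"STABLECOIN SUPPLY: ${data['total_supply_usd'] / 1e9:,.1f}B"


def build_peg_block(data: dict) -> str:
    alerts = data.get("peg_alerts") or ["none"]
    return "PEG ALERTS: " + ", ".join(alerts)


BLOCK_BUILDERS = (build_supply_block, build_peg_block)
DAILY_TEMPLATE = "Write a sell-side morning note from these blocks:\n{blocks}"


def assemble_prompt(data: dict, template: str) -> str:
    """Run every shared builder and slot the resulting text blocks into a template."""
    blocks = "\n".join(build(data) for build in BLOCK_BUILDERS)
    return template.format(blocks=blocks)


# --- research_report_engine.py: same builders, different template ---
# (in a two-file layout this would start with `from briefing_engine import assemble_prompt`)
RESEARCH_TEMPLATE = (
    "Write a 3,000-5,000 word weekly research note with investment themes, "
    "a risk matrix and one conviction call, using:\n{blocks}"
)
```

Adding a new data section then means writing one builder; both outputs pick it up without touching either template.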

Graceful Degradation: A core principle. If a key is missing or an API fails (e.g., FRED or Dune), that data section renders “UNAVAILABLE.” The Gemini prompt explicitly instructs the AI to flag the gap and never infer from missing data. This prevents silent failures and AI hallucinations.
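A minimal sketch of graceful degradation, assuming each data source is wrapped as a zero-argument fetcher function. FeedResult, safe_fetch and render_section are illustrative names, not the author’s code; the one detail taken from the article is the literal UNAVAILABLE marker the prompt keys on.

```python
import logging
from dataclasses import dataclass
from typing import Any, Callable

log = logging.getLogger("briefing")


@dataclass
class FeedResult:
    name: str
    ok: bool
    data: Any = None
    error: str = ""


def safe_fetch(name: str, fetcher: Callable[[], Any]) -> FeedResult:
    """Run one fetcher; a failure downgrades its section instead of crashing the run."""
    try:
        data = fetcher()
    except Exception as exc:  # timeouts, HTTP errors, bad JSON, missing API keys...
        log.warning("%s failed: %s", name, exc)
        return FeedResult(name, ok=False, error=f"{type(exc).__name__}: {exc}")
    if data is None or (isinstance(data, (list, dict)) and not data):
        return FeedResult(name, ok=False, error="empty response")  # assumption: empty == failed
    return FeedResult(name, ok=True, data=data)


def render_section(result: FeedResult, render: Callable[[Any], str]) -> str:
    """Render the section normally, or emit the literal UNAVAILABLE marker."""
    if not result.ok:
        return f"{result.name}: UNAVAILABLE ({result.error})"
    return render(result.data)
```

Treating an empty payload as a failure is a judgment call: an API that returns [] on a bad request would otherwise render as a misleadingly quiet section.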

The Data Sources: Free APIs + Robust Aggregation

The secret is aggregation and intelligent synthesis. Every piece of data needed is publicly available, often via free API tiers:

On-chain: DeFiLlama (stablecoin supply, TVL, yields, RWA, Venture Funding), Tronscan, Solscan (non-EVM chain intelligence), Snapshot (governance), Curve Finance (pool balance monitoring).

DeFi Markets: Pendle Finance (yield curves), Morpho Blue (curator vaults).

Derivatives: Binance (top-trader long/short), Hyperliquid (perpetuals), Deribit (options).

Institutional Positioning: SEC EDGAR (13F filings), CoinShares (weekly fund flows), CFTC (Commitment of Traders).

Macro Overlay: FRED (Fed funds, DXY, T-Bills), Yahoo Finance (Treasury yields).

Prediction Markets: Polymarket (real-money probabilities).

Regulatory: SEC, CFTC, OCC, Federal Reserve, FinCEN (RSS feeds), plus a static legislation tracker.

News: 20 RSS subscriptions across 8 stablecoin-focused feeds (The Block, CoinDesk, Blockworks, Decrypt, DLNews, Cointelegraph, The Defiant, Unchained) and 12 fintech/banking feeds (Finextra, Pymnts, American Banker, TechCrunch Fintech, Fintech Futures, Sifted, and crossover sources), with keyword frequency scoring.

Standalone Data Tools (On-Demand / MCP Integrations): Direct monitoring of Circle and Tether reports (Reserve Attestation), forward-looking liquidation cascade risk tiers, and real-time wallet flows via Dune Analytics. These are positioned outside the daily automated run to manage execution speed and cost.

The beauty of the system is that you can get a robust core briefing running with zero paid API keys. The majority of the system relies on publicly available endpoints and degrades gracefully when optional keys are absent.
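As an example of a keyless source, DeFiLlama’s public stablecoins endpoint returns supply, price and mechanism data for tracked stablecoins. The sketch below assumes the endpoint URL and the field names (peggedAssets, pegType, circulating.peggedUSD, circulatingPrevWeek, price, pegMechanism) based on DeFiLlama’s public response; verify them against a live call before relying on this. Parsing is kept separate from the HTTP call so it can be tested offline, and supply_monitor is a hypothetical name.

```python
import json
import urllib.request

# Assumed public, keyless endpoint; check field names against a live response.
URL = "https://stablecoins.llama.fi/stablecoins?includePrices=true"


def fetch_stablecoins(url: str = URL, timeout: float = 20.0) -> dict:
    req = urllib.request.Request(url, headers={"User-Agent": "stablecoin-briefing-sketch"})
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)


def supply_monitor(payload: dict, top_n: int = 15) -> list[dict]:
    """Top-N USD stablecoins by supply, with 7d % change and peg deviation in bps."""
    rows = []
    for asset in payload.get("peggedAssets", []):
        if asset.get("pegType") != "peggedUSD":
            continue  # skip EUR, gold and other non-USD pegs
        supply = (asset.get("circulating") or {}).get("peggedUSD") or 0.0
        prev_week = (asset.get("circulatingPrevWeek") or {}).get("peggedUSD") or 0.0
        price = asset.get("price")
        rows.append({
            "symbol": asset.get("symbol"),
            "mechanism": asset.get("pegMechanism"),
            "supply_usd": supply,
            "chg_7d_pct": (supply / prev_week - 1) * 100 if prev_week else None,
            "peg_dev_bps": (price - 1.0) * 10_000 if isinstance(price, (int, float)) else None,
        })
    rows.sort(key=lambda r: r["supply_usd"], reverse=True)
    return rows[:top_n]
```

supply_monitor(fetch_stablecoins()) would then yield the Top 15 table; chg_7d_pct and peg_dev_bps are None when the corresponding input is missing.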

The Dune MCP Skill (Requires Paid Key)

Beyond the fixed Python data pipeline, the system integrates the Dune Model Context Protocol (MCP) server. This is the secret weapon: it allows the AI (Claude or any MCP-compatible LLM) to run live Dune SQL queries on demand, mid-conversation.

This unlocks dynamic, real-time on-chain intelligence. Instead of just static data, I can ask Claude things like:

“What’s the CEX inflow/outflow signal right now?” → Claude runs the SQL directly against Dune and interprets the results.

“Are there any tokens with >200% volume acceleration today?” → Claude runs the emerging token traction query and interprets the results.

“Show me all transfers >$10M in the last 24h with entity labels” → Returns Binance, Tether Treasury, protocol wallets identified by name.

I’ve pre-built 10 critical queries into the library.

The CEX Flow Signal is particularly powerful: net inflow >$100M suggests RISK-OFF (stablecoins accumulating at exchanges = sell pressure); net outflow >$100M signals RISK-ON (capital deploying into DeFi/crypto).
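The CEX Flow Signal rule is simple enough to express directly. A sketch, assuming 24h stablecoin inflows and outflows to exchanges have already been computed in USD (for example from the Dune query); the function name and the NEUTRAL label for the band between the two thresholds are assumptions.

```python
def cex_flow_signal(inflow_usd: float, outflow_usd: float,
                    threshold_usd: float = 100e6) -> tuple[float, str]:
    """Return (net_flow, label); positive net means stablecoins moving onto exchanges."""
    net = inflow_usd - outflow_usd
    if net > threshold_usd:
        return net, "RISK-OFF"  # stablecoins accumulating at exchanges (article: sell pressure)
    if net < -threshold_usd:
        return net, "RISK-ON"   # capital leaving exchanges to deploy into DeFi/crypto
    return net, "NEUTRAL"       # assumed label for flows inside the +/-$100M band
```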

The repo’s dune_onchain_data.py holds 8 SQL queries covering mint/burn net flows, chain volume, whale concentration, labeled large transfers (>$10M), CEX inflow/outflow, DEX stablecoin volume, and cross-chain bridge flows. Because Dune execution is constrained by cost and speed on free or low-tier accounts, I decoupled these queries from the automated daily brief and positioned them alongside the MCP server for on-demand use only.

The Investment Thesis Powering It All

Instead of being a simple data aggregator, the system is an opinionated analyst. Its core analysis is calibrated around a single belief:

Stablecoins are the dollar system being rebuilt on open rails. The $300B+ stablecoin market is not a crypto phenomenon; it is the first phase of a multi-decade restructuring of how dollars move globally.

This informs every interpretation. For example:

USDC wins in regulated markets, USDT wins in unregulated ones. They serve different markets; both grow.

The yield layer is the next battleground. Who can offer the best return on on-chain dollars? Pendle, Ethena, and BlackRock’s BUIDL are the key players.

Bank-DeFi convergence is inevitable. Hybrid entities with both a bank charter and DeFi composability accrue the most value.

The regulatory calendar is the dominant price driver. GENIUS Act, EU MiCA Phase 2, HKMA/MAS licensing – these create binary outcomes.

Non-EVM chains are undermonitored. Tron USDT velocity = EM cross-border settlement demand; Solana USDC growth = retail/consumer app adoption.

This thesis translates into strict Analyst Quality Standards for the AI’s output, baked into the prompt and FEEDBACK-LOG.md:

Never write: “could potentially impact,” “remains to be seen,” “the market may react.”

Always write: Specific numbers (“USDC supply grew $2.3B (+5.4%) in 7 days, driven by Base chain (+$1.8B)”), causal chains (“Tron stablecoin supply grew 3.2% this week, consistent with EM settlement demand ahead of [catalyst]”), direct implications (“The GENIUS Act markup session next week is the binary event...”).

Key data interpretation rules: Tron USDT is an EM settlement signal, not DeFi. Yield-bearing stablecoins (sUSDe, BUIDL) trading above $1.00 are intentional, not peg deviations. 13F data is 45-135 days stale; always cite period_of_report. News volume signal is keyword scoring, never “sentiment analysis.”

The system also knows when to interrupt, not just summarize. These Escalation Thresholds trigger immediate alerts:

Any stablecoin >50bps off its USD peg.

Total stablecoin market cap drops >$5B in 24 hours.

SEC/Treasury enforcement action against a stablecoin issuer.

Stablecoin issuer announces redemption pause or reserve audit failure.

Congress votes on stablecoin legislation.

Any Morpho curator vault with >$100M TVL changes risk level to HIGH or CRITICAL.

Curator vault utilization exceeds 95% on a >$50M vault.

CEX net stablecoin inflow/outflow exceeds +/-$500M in 24h.

Any token shows >500% DEX volume acceleration in 24h.
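The quantitative thresholds in this list can be checked mechanically on every run. A sketch over a hypothetical snapshot dict: the thresholds are copied from the list, while the schema, the alert strings and the choice to exempt yield-accruing tokens from the peg rule are assumptions. The event-driven triggers (enforcement actions, redemption pauses, congressional votes) need news classification and are left out.

```python
# Thresholds copied from the escalation list; the snapshot schema is hypothetical.
PEG_BPS = 50
MCAP_DROP_USD = 5e9
VAULT_TVL_RISK_USD = 100e6
VAULT_TVL_UTIL_USD = 50e6
UTILIZATION_LIMIT = 0.95
CEX_FLOW_USD = 500e6
DEX_ACCEL_PCT = 500
ELEVATED = ("HIGH", "CRITICAL")


def escalations(snap: dict) -> list[str]:
    """Return one alert string per quantitative threshold breached in a run snapshot."""
    alerts = []
    for coin in snap.get("stablecoins", []):
        # assumption: yield-accruing tokens (sUSDe, BUIDL) are exempt, as in the peg-alert logic
        if not coin.get("is_yield_accruing") and abs(coin["peg_dev_bps"]) > PEG_BPS:
            alerts.append(f"PEG: {coin['symbol']} {coin['peg_dev_bps']:+.0f}bps")
    drop = snap.get("mcap_prev_24h", 0.0) - snap.get("mcap_now", 0.0)
    if drop > MCAP_DROP_USD:
        alerts.append(f"MCAP: -${drop / 1e9:.1f}B in 24h")
    for v in snap.get("vaults", []):
        if v["tvl_usd"] > VAULT_TVL_RISK_USD and v["risk"] in ELEVATED and v["risk"] != v["prev_risk"]:
            alerts.append(f"VAULT RISK: {v['name']} {v['prev_risk']} -> {v['risk']}")
        if v["tvl_usd"] > VAULT_TVL_UTIL_USD and v["utilization"] > UTILIZATION_LIMIT:
            alerts.append(f"VAULT UTILIZATION: {v['name']} at {v['utilization']:.0%}")
    net = snap.get("cex_net_flow_usd", 0.0)
    if abs(net) > CEX_FLOW_USD:
        alerts.append(f"CEX FLOW: net {net / 1e6:+,.0f}M USD in 24h")
    for tok in snap.get("dex_tokens", []):
        if tok["volume_accel_pct"] > DEX_ACCEL_PCT:
            alerts.append(f"DEX: {tok['symbol']} volume +{tok['volume_accel_pct']:.0f}% in 24h")
    return alerts
```

An empty list means the brief can stay a summary; anything returned is what should interrupt.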

What I Got Wrong (and How I Fixed It)

Building a system like this isn’t a straight line. I hit two major roadblocks early on that taught me critical lessons.

1. The Hallucinating AI (Over Missing Data)

My initial version of the AI synthesis was a little too confident. If, for instance, the CoinShares API for institutional_flows_data.py failed to return data, Gemini would still happily generate a paragraph about “institutional fund flows,” often inventing numbers or trends out of thin air. It was drawing conclusions from a void.

The fix involved a two-pronged approach:

Data Feed Health: I implemented data_quality.py to actively track the availability and success of every single data source.

Explicit AI Instruction: The Gemini prompt was fundamentally redesigned. Now, before any synthesis, the prompt includes a “Data Feed Health Dashboard” section. If institutional_flows_data.py failed, the prompt would explicitly state: “Institutional Fund Flows: UNAVAILABLE (API failure).” Crucially, the prompt then instructs Gemini: “If a data feed is explicitly marked as UNAVAILABLE, you MUST render that section as ‘UNAVAILABLE’ in your synthesis and NEVER infer or fabricate data for it.” This eliminated hallucinations by making the AI aware of its own blind spots.
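A sketch of how such a health preamble might be assembled, assuming the fetch layer reports each section as healthy (None) or with a short failure reason. The function name and layout are hypothetical; the guardrail sentence is quoted from the prompt described above.

```python
from typing import Dict, Optional

# The guardrail sentence is quoted from the article; the function and input shape are hypothetical.
GUARDRAIL = (
    "If a data feed is explicitly marked as UNAVAILABLE, you MUST render that section as "
    "'UNAVAILABLE' in your synthesis and NEVER infer or fabricate data for it."
)


def health_dashboard(feeds: Dict[str, Optional[str]]) -> str:
    """Build the prompt preamble; `feeds` maps section name -> None (healthy) or failure reason."""
    lines = ["DATA FEED HEALTH DASHBOARD"]
    for name, failure in feeds.items():
        lines.append(f"- {name}: OK" if failure is None else f"- {name}: UNAVAILABLE ({failure})")
    healthy = sum(1 for failure in feeds.values() if failure is None)
    lines.append(f"{healthy}/{len(feeds)} feeds healthy.")
    lines.append(GUARDRAIL)
    return "\n".join(lines)
```

Placing the dashboard before the data blocks means the model reads its blind spots first, before it starts drafting any section.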

2. Misinterpreting “Yield-Bearing” Stablecoins

Early iterations of the peg deviation monitor were too simplistic. They’d flag tokens like sUSDe or BlackRock’s BUIDL trading at $1.002 as a minor “peg deviation.” While technically 20bps above $1.00 (past the 10bps alert threshold), the flag was analytically incorrect: these tokens are designed to accrue value above $1.00.

The solution:

is_yield_accruing Field: I added an is_yield_accruing boolean field to the stablecoin_data.py fetcher. This field is set to True for known yield-bearing stablecoins.

Intelligent Peg Logic: The peg deviation detection logic (peg_alerts section) now checks this field. If is_yield_accruing is True, the token is explicitly exempted from peg deviation alerts. The Gemini prompt also received context on this, ensuring the AI understood why certain tokens are allowed to trade above $1.00.
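The corrected peg logic can be sketched as follows. The 10bps alert and 50bps escalation thresholds come from this article; the WATCH/ESCALATE severity labels and the function names are hypothetical.

```python
from typing import Optional

ALERT_BPS = 10     # article: peg alerts fire above 10bps
ESCALATE_BPS = 50  # article: escalation threshold above 50bps


def peg_deviation_bps(price: float, peg: float = 1.0) -> float:
    """Signed distance from the peg in basis points (1bp = 0.01%)."""
    return (price - peg) / peg * 10_000


def peg_alert(symbol: str, price: float, is_yield_accruing: bool) -> Optional[dict]:
    """Return an alert dict, or None when no alert is warranted."""
    if is_yield_accruing:
        return None  # designed to trade above $1.00, so not a peg deviation
    bps = peg_deviation_bps(price)
    if abs(bps) <= ALERT_BPS:
        return None
    severity = "ESCALATE" if abs(bps) > ESCALATE_BPS else "WATCH"  # hypothetical labels
    return {"symbol": symbol, "deviation_bps": round(bps, 1), "severity": severity}
```

The arithmetic behind the original false positive: $1.002 is (1.002 - 1.00) / 1.00 × 10,000 = 20bps, enough to clear the 10bps threshold unless the token is flagged as yield-accruing.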

These weren’t just bug fixes; they were fundamental calibrations that transformed the system from a smart script into a reliable analyst.

Known Gaps/Roadmap

No system is perfect. Here’s what’s on the roadmap — or explicitly not in the automated pipeline yet:

No real-time mint/burn event stream: Would require a real-time Dune query on ERC-20 zero-address transfers triggering on each block, rather than the current daily aggregation.

No EM stablecoin premium tracker: P2P USDT premium in Turkey/Argentina/Nigeria would be high-signal. The data exists on local P2P exchanges; a scraper for it doesn’t exist yet.

Sentiment is RSS keyword scoring, not true NLP: Real sentiment requires X/Twitter API or Kaito.ai integration. What the system does is frequency analysis, useful as a volume signal but not for valence (though we now count the actual headlines triggered, to filter out noise).

RWA tracking is TVL only: Ondo/Superstate publish NAV/yield via API (not yet integrated).

Dune on-chain data is off-loaded: Due to execution costs and speed limits on the automated daily run, Dune SQL intelligence has been extracted out to the MCP server for on-demand use only.
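The headline-counting change mentioned above is worth making concrete: the News Volume Signal counts how many headlines mention a keyword rather than how many times the word appears, so one article repeating “USDC” five times cannot swamp the signal. A sketch with a hypothetical keyword list and function names; dropping verbatim duplicate headlines syndicated across feeds is an added assumption.

```python
import re
from collections import Counter

# Hypothetical keyword list; the author's actual list isn't published.
KEYWORDS = ("GENIUS Act", "USDC", "USDT", "depeg", "MiCA")


def headline_counts(headlines: list[str], keywords=KEYWORDS) -> Counter:
    """Number of HEADLINES mentioning each keyword (repeats within a headline count once)."""
    patterns = {kw: re.compile(rf"\b{re.escape(kw)}\b", re.IGNORECASE) for kw in keywords}
    counts = Counter()
    for headline in set(headlines):  # drop verbatim duplicates syndicated across feeds
        for kw, pattern in patterns.items():
            if pattern.search(headline):
                counts[kw] += 1
    return counts


def concentration(counts: Counter) -> dict:
    """Share of keyword hits per keyword: a volume signal, not sentiment."""
    total = sum(counts.values())
    return {kw: n / total for kw, n in counts.most_common()} if total else {}
```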

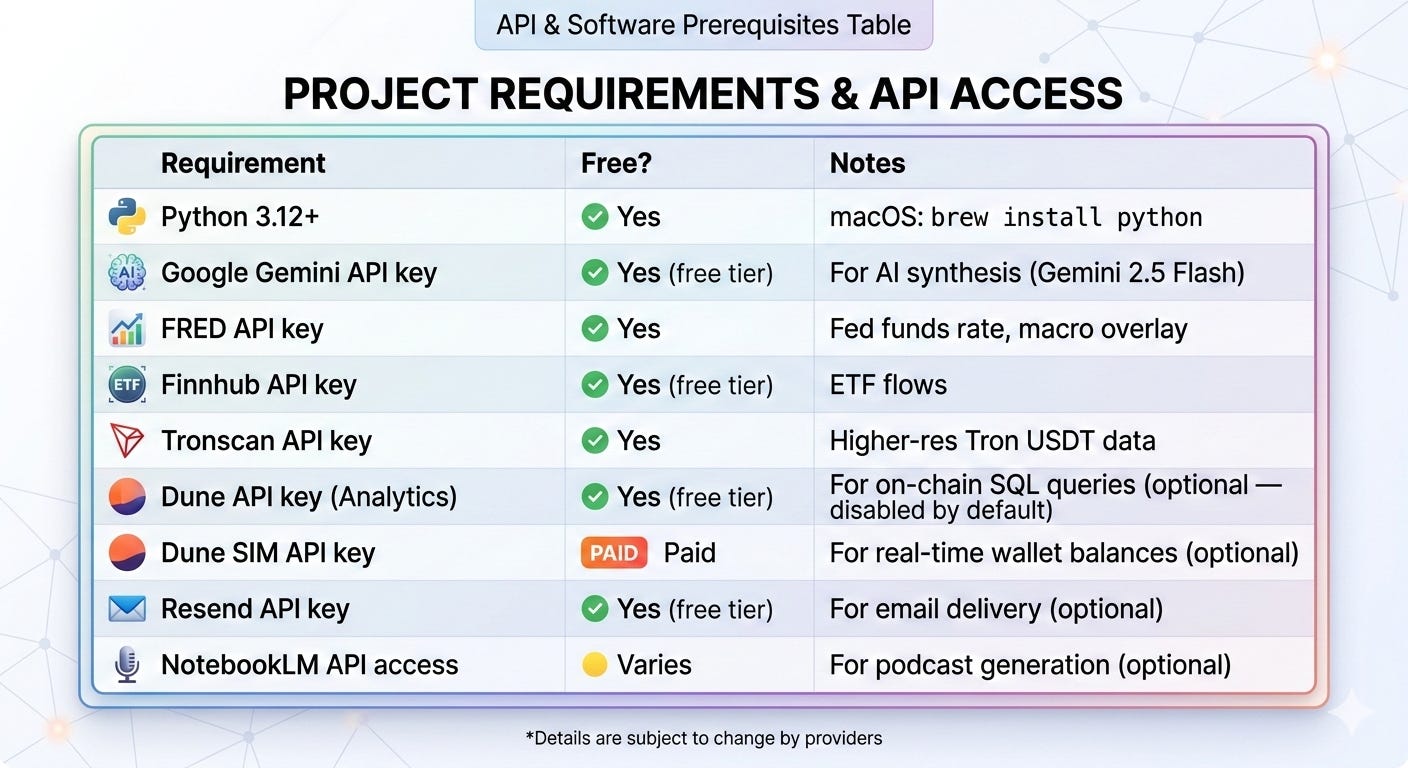

How You Can Build This Yourself

Here’s what you need to replicate it:

Many critical components (DeFiLlama, SEC EDGAR, all 20 news RSS feeds, Binance, Hyperliquid, Deribit, Pendle, Morpho, Snapshot, Polymarket, CoinShares, CFTC, Solscan, Curve) require no API key at all. The system degrades gracefully: missing keys just skip that section.

Setup in 5 Steps

```bash
# 1. Clone / download the project
cd "path/to/Stablecoin News Skill"

# 2. Create and activate virtual environment
python3 -m venv .venv
source .venv/bin/activate

# 3. Install dependencies
pip install -r requirements.txt

# 4. Copy and fill in API keys
cp .env.example .env
# Edit .env: at minimum add GOOGLE_API_KEY for AI synthesis

# 5. Run your first briefing
python run_briefing.py
```

Add Shell Aliases for Daily Use

For quick access, add these to your ~/.zshrc (or ~/.bashrc):

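```bash
# Call the project's virtualenv interpreter directly so the aliases work without activating it first
alias brief='cd "/path/to/Stablecoin News Skill" && .venv/bin/python run_briefing.py'
alias report='cd "/path/to/Stablecoin News Skill" && .venv/bin/python run_research_report.py'
```

Then, just type brief for your morning read or report for your weekly research note.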

For Paid Subscribers: The Complete System

The real intelligence lies not just in the Python code but in how the AI is instructed and calibrated. These are the critical markdown files that define the AI’s role, its worldview, and its analytical standards.

Paid subscribers get every file the system runs on (the actual production files):

CLAUDE.md — Master instruction file with all analyst standards and escalation thresholds

skill.md — Full skill definition with peg deviation tiers, curator vault risk scoring, chain demand frameworks

workflows/daily_stablecoin_briefing.md — Complete daily SOP with data source TTLs and edge case handling

workflows/sell_side_research_report.md — Weekly research report SOP with quality checklist

workflows/daily_retrospective.md — Post-run quality review SOP

dune_mcp_skill.md — 10 pre-built SQL queries for live on-chain intelligence

shared-context/THESIS.md — The investment thesis all agents share

shared-context/FEEDBACK-LOG.md — Pre-seeded calibration rules from running this system live

Where This Is Going

The challenge with stablecoin intelligence comes from pulling many scattered data sources into one coherent view, not from a lack of available data. Most data sources you need already exist for free. DeFiLlama has perfect supply data. The CFTC publishes CoT reports every Friday. Deribit publishes the full options chain. SEC EDGAR is public. RSS feeds from every regulator that matters cost nothing.

What didn’t exist was a single system that pulls all of it together, interprets it correctly, and delivers a coherent daily output before the trading day opens.

That system exists now. And it gets better every day: each correction in the FEEDBACK-LOG sharpens the next run.

The next 18 months are the most consequential period in the history of this market. The GENIUS Act is in active negotiation. Circle is preparing to go public. BlackRock’s BUIDL is expanding to new chains. Kraken just secured Fed master account access. The regulatory and capital formation events compressing into 2025–2026 will define the winners for a decade.

If you’re serious about this market, every morning you don’t have a systematic read on it is a morning you’re behind.

What should I build next? Reply or comment with the most repetitive task in your investment workflow you’d pay to automate. The most requested one could become next week’s build.

Build fast. Invest smart. Automate everything in between.

If you want to work with me on AI automations: jurgis@evoaai.com or via LinkedIn.